Hi,

I need to download all the responses from a Typeform survey. I am using the requests library in Python and paging through the results with the 'before' param, but this takes a long time when there are a lot of responses (around 2 minutes for 14,000 responses, in batches of 1000). I've added my existing code below in case that helps.

Is there any way to speed this up by requesting multiple pages of responses simultaneously? I assume this would require knowing in advance the token of every 1000th response. Please let me know whether this is the right way to go about it, or whether there is a better way to fetch multiple pages of responses at once.

import logging
import time

import requests

logger = logging.getLogger(__name__)


def get_responses_page(form_id, token, page_size, before=None):
    """Fetch one page of responses, optionally only those before a given response token."""
    url = f"https://api.typeform.com/forms/{form_id}/responses"
    headers = {"Authorization": f"Bearer {token}"}
    params = {"page_size": page_size}
    if before:
        params["before"] = before
    response = requests.get(url, headers=headers, params=params)
    response.raise_for_status()
    return response.json()

def get_all_form_responses(form_id, token):

all_responses = []

page_size = 1000

before_token = None

call_counter = 0

total_items = 0

while True:

start_time = time.time()

response_json = get_responses_page(form_id, token, page_size, before_token)

total_items = response_json.get("total_items", 0)

page_count = response_json.get("page_count", -1)

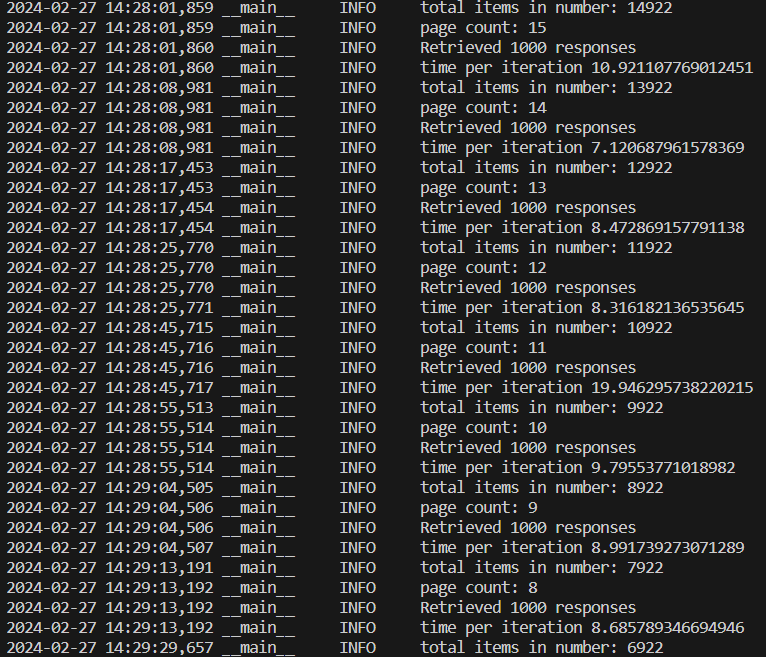

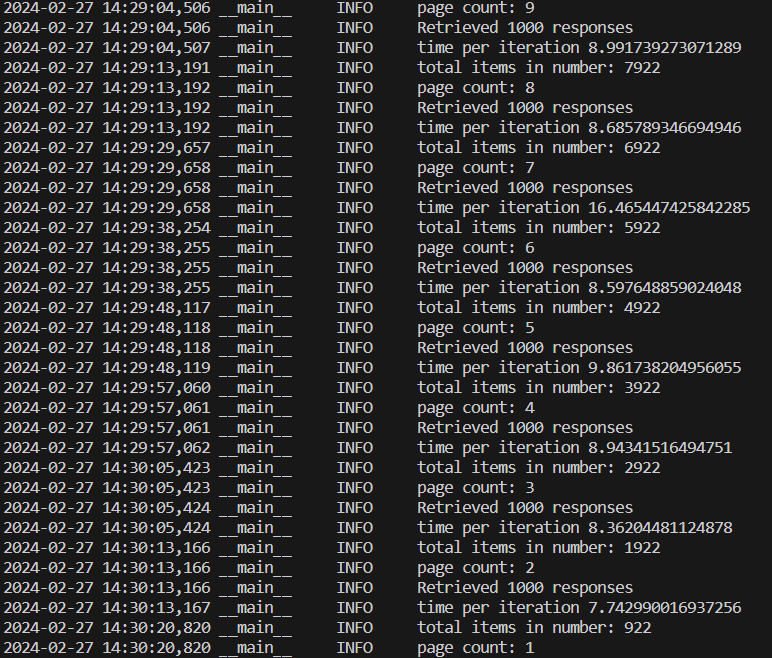

logger.info(f"total items in number: {total_items}")

logger.info(f"page count: {page_count}")

items = response_json.get("items", [])

before_token = items[-1].get("token", [])

all_responses.extend(items)

call_counter += 1

logger.info(f"Retrieved {len(items)} responses")

end_time = time.time()

logger.info(f"time per iteration {end_time-start_time}")

if page_count == 1:

break # Exit loop if there are no more pages

elif page_count == -1:

logger.info("unable to find page_count")

logger.info(f"call counter: {call_counter}")

return all_responses
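
To make the question more concrete, here is a rough sketch of the kind of parallel fetch I have in mind. Since the 'before' token for a page is only known after the previous page has been fetched, the sketch splits the work by submission-time window instead of by token. This is only an assumption on my side: it presumes the responses endpoint can be filtered to a time window (for example through `since` and `until` params), which I have not verified against my form. `split_time_range`, `fetch_windows_in_parallel` and the `fetch_window` callable are hypothetical names, not part of any library; `fetch_window(since, until)` would page through one window with the same 'before' loop as above and return a list of response dicts.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime, timedelta, timezone


def split_time_range(start, end, n_windows):
    """Split the range from start to end into n_windows contiguous (since, until) pairs."""
    step = (end - start) / n_windows
    edges = [start + i * step for i in range(n_windows)] + [end]
    return list(zip(edges[:-1], edges[1:]))


def fetch_windows_in_parallel(fetch_window, windows, max_workers=4):
    """Call fetch_window(since, until) for each window on a thread pool.

    fetch_window is a hypothetical callable supplied by the caller; it is
    assumed to return a list of response dicts that each carry a "token" key.
    """
    unique = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as the windows
        for batch in pool.map(lambda w: fetch_window(*w), windows):
            for item in batch:
                # A response sitting exactly on a window boundary could be
                # returned twice, so de-duplicate on its token.
                unique.setdefault(item["token"], item)
    return list(unique.values())


if __name__ == "__main__":
    # Offline demo with fake data instead of real API calls.
    start = datetime(2024, 1, 1, tzinfo=timezone.utc)
    end = start + timedelta(days=4)
    fake = [{"token": f"t{i}", "submitted_at": start + timedelta(hours=i)}
            for i in range(96)]

    def fake_fetch_window(since, until):
        return [r for r in fake if since <= r["submitted_at"] <= until]

    responses = fetch_windows_in_parallel(
        fake_fetch_window, split_time_range(start, end, 4))
    print(len(responses))
```

Threads seem like a reasonable fit here because the time is spent waiting on HTTP requests, but the number of workers would need to stay within whatever rate limit the API enforces, and evenly sized time windows only balance the load if responses arrived at a fairly steady rate.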